- #MOTION BLEND MOTIONBUILDER 2014 UPDATE#

- #MOTION BLEND MOTIONBUILDER 2014 DRIVER#

- #MOTION BLEND MOTIONBUILDER 2014 CODE#

#MOTION BLEND MOTIONBUILDER 2014 DRIVER#

Developer Jasper Brekelmans is a key driver the democratisation of mocap, with a series of affordable Brekel tools that work in conjunction with Microsoft's Kinect or the Leap Motion gesture-recognition hardware: details Once the domain of very high-end studios and bespoke outfits, motion capture continues to tumble in cost, to the point where there are now free, if somewhat basic, open source solutions. IPiSoft produces a number of markerless systems which work with the Kinect and PlayStation Eye If you look at things like GRID from Nvidia, you can see the infrastructure is in place to go crazy with this – again it's just about having a modern toolset that can take advantage of it." 04. This becomes viable once you separate data and the processing of that data, which is happening more and more in VFX tools.Ĭombined with streamed applications this can start looking very interesting indeed – for example, on-demand offloading of simulations that is completely transparent to the artist. "I think the next phase is going to be distributed computation across GPU clusters," he adds, "particularly on the cloud. GPU compute can be a big enabler once it is just treated as a readily available compute resource.

"The reason we're excited about what we've done with GPU compute is that it's now accessible to regular TDs," explains Paul Doyle, CEO and Founder of Fabric Software, "and that there is zero cost experimentation to go with it. The end result is apps or plug-ins that enable animators to work with faster feedback and iterate more quickly – no more guessing what the end result might look like. In most instances, technical directors can get an instant 5x to 10x speed-up, just by flicking the switch to use available GPUs.

#MOTION BLEND MOTIONBUILDER 2014 CODE#

The key element in the new release is the ability to write Fabric code that can run on both the CPU and GPU, without making changes or having any previous knowledge of CUDA or OpenCL.

#MOTION BLEND MOTIONBUILDER 2014 UPDATE#

Expect the tech to appear across other digital content creation apps in due course.įabric Software is just about to update Fabric Engine, a development system that enables studios to build their own custom tools. The app also supports real-time character deformation with subdivision surfaces and tessellation, but this technology isn't just for high-end bespoke apps: Maya 2105 already features GPU-accelerated OpenSubdiv, and it's due to be implemented in Blender 2.72, enabling much faster previews of animated characters. But the power of the GPU is also being applied to things like Pixar's real-time preview system, Presto.ĭeveloped for the film Brave, this proprietary software enables an animator to view their animation in real-time, enabling them to work faster and more effectively.ĭriven by a £4,000 Nvidia Quadro K6000 card, the system leverages the power of the GPU to drive an OpenGL 4.0 system capable of displaying fully animatable characters with tens of millions of poseable hairs with PTex textures and real-time shadows. It's not just the rendering pipeline that's seeing the benefits of GPU acceleration already being employed elsewhere in computationally heavy tasks from physics and fluid simulations to filters in Adobe Photoshop. PIxar demoed Presto at NVIDIA's GTC conference The system is driven using a Boxx workstation, fitted with a Quadro K6000 and two Tesla K40s - around £17,000-worth of gear. Although the live image is a little coarse, the system can be paused at any time and the system rapidly resolves to show the near-final output, including motion blur and depth of field.

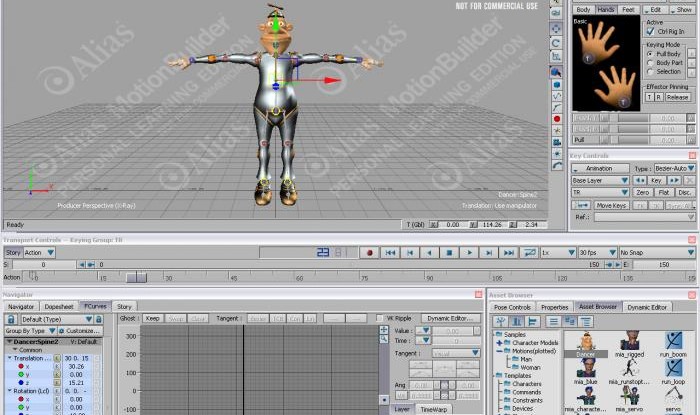

Kevin Margo of Blur Studio recently demonstrated a combination of motion capture hardware with V-Ray RT for MotionBuilder, providing an almost-real-time view of the CG characters. The ultimate goal is to refine this to the point where previs footage is near or actual final quality. The concept was advanced during the shooting of James Cameron's Avatar, where a 'virtual camera' enabled a videogame-quality version of the CG characters and environments to be viewed in real-time, enabling Cameron to frame his shots. A key element in the creation of visual effects sequences pre-visualisation is a way for the director to see a version of the scene complete with basic effects in place. Previs was seriously advanced during the making of AvatarĪ side effect of GPU-accelerated rendering is in previs.